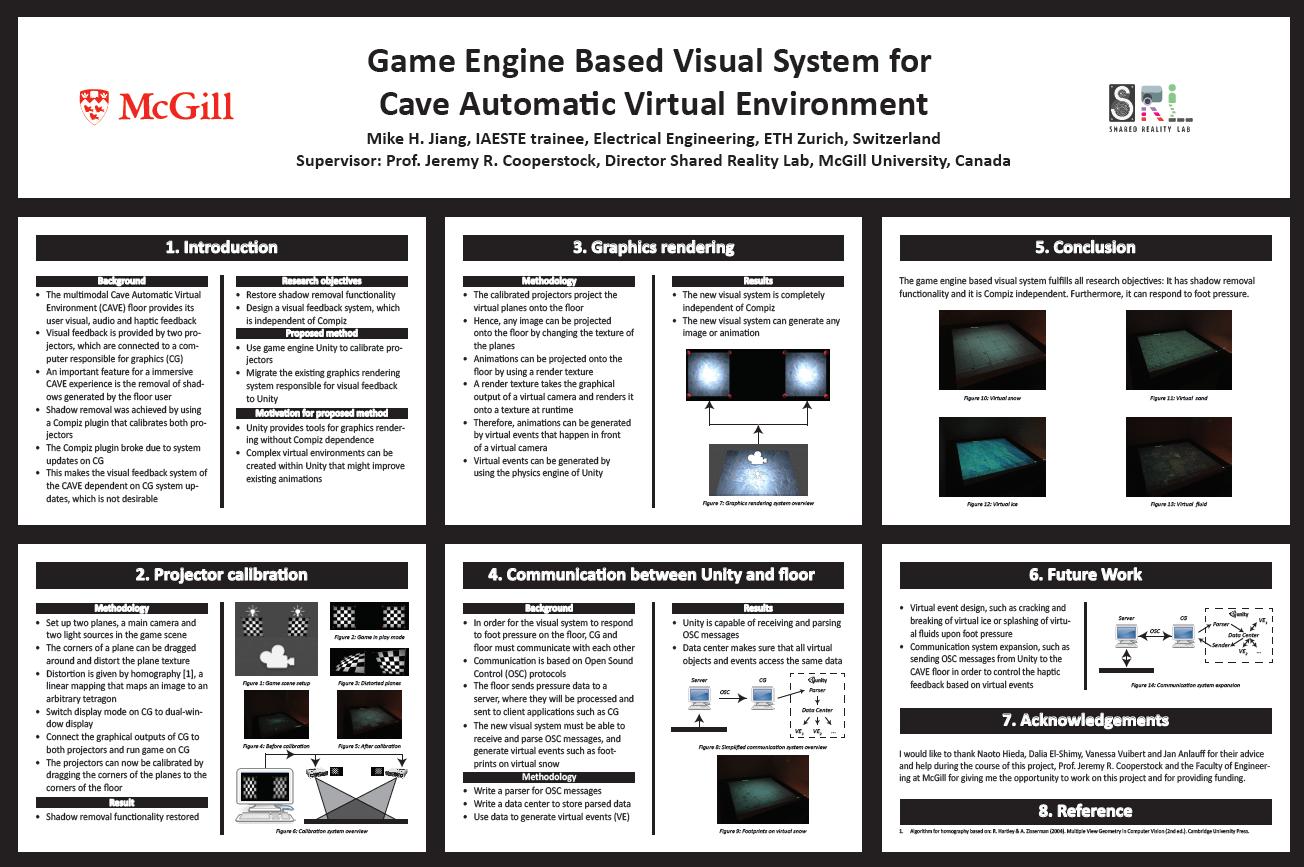

During summer 2014, Mike interned at the Shared Reality Lab at McGill University. When he arrived, the Compiz-based software projector calibration system for the haptic floor of the lab's multi-modal CAVE was broken. Mike replaced that calibration system with a Unity-based system and reengineered the visual channel and visual-haptic interfaces of the CAVE. As a result, the projectors could be calibrated again, and responsive virtual worlds (e.g., landscapes containing forests, lakes, snow, ice, and particle systems) could once more be displayed. The system could even display footprints after someone stepped on the floor.

Summer Undergraduate Research in Engineering (SURE) Program Poster (~80MB)