Summary

How something is presented can be as important as the message itself.

In the age of political polarization and election meddling, it is of vital importance to understand which factors contribute to the formation of public opinions. Television is one of the main sources of information for a large portion of the general population.

To understand discrepancies in news perception, what causes them, and what implications they might have for public political debate, we first need to understand how television news is constructed and to define the presentational aspects of the news. With that understanding, we can build tools that analyze news consumption by the public.

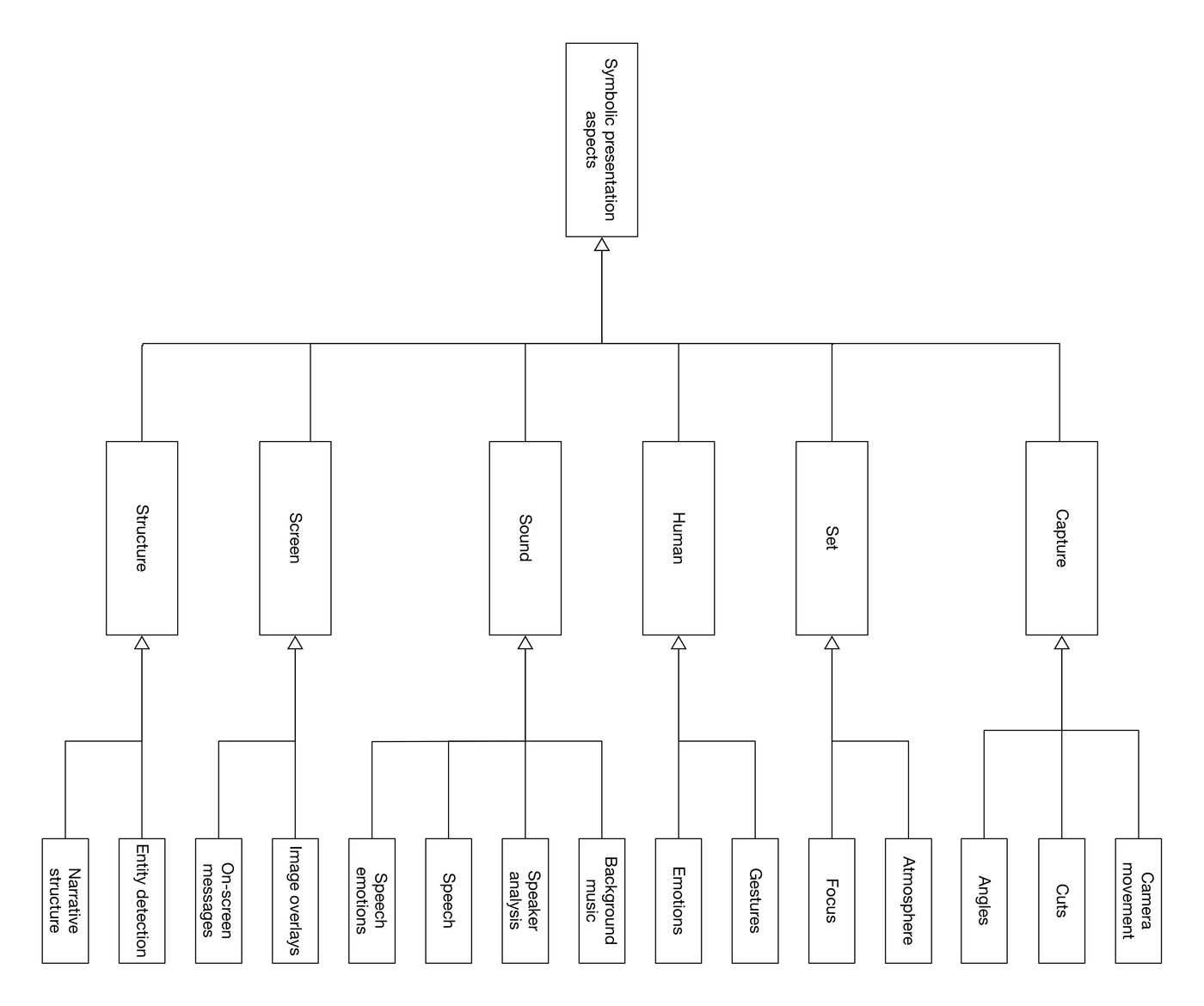

We aim to use various artificial intelligence techniques to model the “subcarriers of information” present in a TV newscast and to automatically detect and understand visual and auditory cues beyond the spoken word, including the layout of the set, the affect of the participants, the nature of the motion, and other cues. Our goal is to develop an algorithmic understanding of journalistic choices in the way news content is presented. We also attempt to develop an understanding of higher-level characteristics of television news, such as television set atmosphere or political bias. Together, these would enable a broad, comprehensive algorithmic analysis of how news presentation tries to shape public political debate.

Contributions

Mike helped Veronika Eickhoff with her thesis and co-led three MIT undergraduates in developing a set of modules for facial expression extraction, sentiment analysis of news scrolls, story segmentation, and foreground-background separation. The text above is taken from Veronika’s work.

Skills

Python, IBM Watson NLP, Google Cloud Vision API, Affectiva SDK, MongoDB, ffmpeg, Kubernetes, Docker, AWS, Amazon Mechanical Turk, Leadership.